FDA Halts AI Diagnostic Rollout Over ‘Black Box’ Bias: The Future of Health Tech on Hold

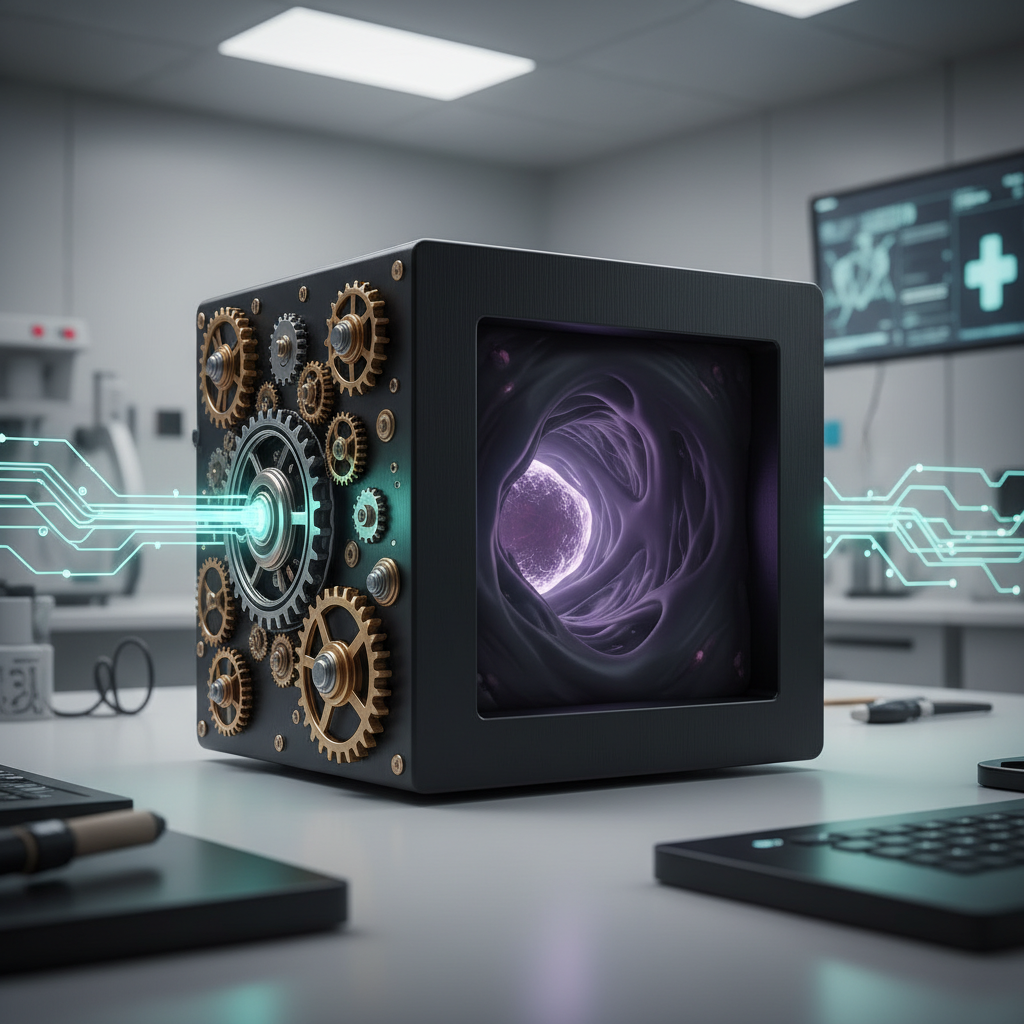

A significant tremor just rattled the rapidly expanding world of medical artificial intelligence. The U.S. Food and Drug Administration (FDA) recently slammed the brakes on a highly anticipated AI-powered diagnostic tool, citing serious concerns over what it termed ‘black box’ bias. This isn’t just a technical snag; it’s a monumental moment that forces us to confront the profound ethical and practical challenges facing AI in medicine and the future of health tech.

Think about it: an algorithm designed to aid in critical diagnoses, one that could revolutionize how we detect diseases, is now under intense scrutiny. Why? Because the very data it was trained on might be inherently flawed, potentially leading to discriminatory outcomes. This move by FDA regulators signals a stark realization: the immense promise of AI must be tempered with an equally immense commitment to equity, transparency, and patient safety.

Table of Contents

- Key Takeaways

- The FDA’s Stance: Why the ‘Black Box’ Became a Red Flag

- AI in Medicine: A Promise and Its Perils

- Understanding AI Bias: More Than Just an Algorithm Glitch

- Navigating the Future of Health Tech: A Path Forward

- What This Means for Patients and Providers

- The Broader Implications for Medical AI Controversy

- Frequently Asked Questions About FDA AI and Bias

Key Takeaways

- The FDA has paused a major AI diagnostic tool rollout due to ‘black box’ bias, marking a critical regulatory intervention.

- AI bias stems primarily from unrepresentative training data, leading to skewed diagnostic outcomes for certain demographic groups.

- The decision highlights the urgent need for greater transparency (Explainable AI – XAI) and diverse datasets in the development of AI in medicine.

- This setback underscores a global push for robust regulatory frameworks to ensure ethical and equitable diagnostic technology.

- The future of health tech hinges on developers and regulators collaborating to build trust and eliminate systemic biases.

The FDA’s Stance: Why the ‘Black Box’ Became a Red Flag

The recent announcement from the FDA was direct and, for many in the industry, a much-needed jolt. The agency effectively halted a rollout of a groundbreaking AI-powered diagnostic technology, stating clearly that the inherent ‘black box’ nature of its decision-making process, coupled with evidence of bias, made it unacceptable for widespread clinical use. This isn’t an anti-innovation stance; it’s a pro-patient safety declaration. And frankly, it’s excellent.

Unpacking the “Black Box” Problem

What exactly is a ‘black box’ in the context of AI? Simply put, it refers to an AI system whose internal workings are so complex, or proprietary, that even its creators struggle to explain precisely how it arrived at a specific conclusion. You feed it data, it spits out an answer. But the steps in between? Opaque. In many applications, that’s fine. But in medicine, where a diagnosis can mean life or death, this opaqueness becomes a profound ethical and safety concern. How do you trust a system you can’t understand?

Consider a scenario where an AI flags a patient for a serious condition. If that diagnosis is incorrect, and the system can’t articulate why it made that call, clinicians are left in the dark. This lack of interpretability isn’t just frustrating; it can undermine medical judgment and delay appropriate interventions. It challenges the very foundation of evidence-based medicine.

The Ethical Imperative: Addressing AI Bias in Healthcare

Here’s the thing: the ‘black box’ issue is exacerbated dramatically when coupled with AI bias. This particular AI diagnostic tool, from what we understand, exhibited differential performance across various demographic groups. Perhaps it was less accurate for women, or for specific racial or ethnic minorities, or for patients from lower socioeconomic backgrounds. This isn’t theoretical; studies have repeatedly shown how algorithmic bias can perpetuate and even amplify existing health disparities.

The FDA’s action highlights a critical ethical imperative: health equity. We cannot allow technological advancements, no matter how promising, to inadvertently create a two-tiered healthcare system where some populations receive less accurate, or even harmful, diagnoses simply because bias in an algorithm went unchecked. The stakes are simply too high for that kind of blind spot.

Precautionary Principle in Practice: A Timely Intervention

This decision by the FDA showcases the precautionary principle in action. When there is a potential for harm, especially when dealing with human health, it’s prudent to pause and investigate thoroughly before widespread deployment. The FDA isn’t killing innovation; it’s demanding responsible innovation. This intervention, while disruptive for the developer, is a crucial step toward building a more trustworthy and equitable future for AI in medicine.

But make no mistake, this isn’t an isolated incident. Concerns about AI bias and explainability have been mounting for years across various sectors, from finance to criminal justice. The medical field, however, carries a unique weight. The direct impact on human lives means that standards for accountability and transparency must be even higher.

AI in Medicine: A Promise and Its Perils

It’s easy to get swept up in the negativity when news like this breaks. But let’s not forget the incredible potential that AI in medicine holds. From accelerating drug discovery to personalizing treatment plans, the applications are vast and genuinely exciting. Many experts believe AI could unlock breakthroughs that would be impossible for human physicians alone.

The Transformative Potential of Diagnostic Technology

Imagine AI systems that can sift through millions of medical images (X-rays, MRIs, pathology slides), identifying subtle anomalies that even the most experienced human eye might miss. Or AI that analyzes genomic data to predict disease risk with unprecedented accuracy, enabling truly preventive care. This kind of advanced diagnostic technology promises earlier detection, more precise treatments, and ultimately, better patient outcomes. Early successes have been nothing short of phenomenal.

For example, some AI tools have already shown promise in detecting certain cancers at earlier stages than traditional methods, or in helping ophthalmologists identify retinal diseases. These tools augment human capabilities; they don’t replace them. They offer a future where clinicians have an unparalleled assistant, a tireless second opinion, available at a moment’s notice.

Where AI Falls Short: Real-World Disparities

However, the real world is messy. And here’s where the promise often runs into the perils. The FDA’s recent halt is a stark reminder that if AI systems are trained on data that doesn’t accurately represent the diverse patient population they are meant to serve, their performance will inevitably falter for underrepresented groups. The underlying issue is often less about the algorithm itself and more about the historical inequities embedded in our data collection.

Think about how medical data has been historically collected. It often overrepresents certain populations, typically white males, and underrepresents others. When an AI learns from this skewed historical data, it learns those same biases. And when it’s deployed, it can then make less accurate predictions or diagnoses for the very groups that were already underserved. This creates a dangerous feedback loop, deepening existing disparities rather than alleviating them.
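To make the idea of a skewed dataset concrete, here is a minimal sketch of how one might compare the makeup of a training set against the population a tool is meant to serve. The group labels, the counts, and the reference shares are all made up for illustration, and the `representation_gaps` helper is hypothetical rather than anything the FDA or a particular developer uses.

```python
from collections import Counter

def representation_gaps(sample_groups, reference_shares):
    """Return {group: share in sample minus share in reference population}.

    A negative value means the group is underrepresented in the sample.
    """
    counts = Counter(sample_groups)
    total = len(sample_groups)
    return {
        group: counts.get(group, 0) / total - share
        for group, share in reference_shares.items()
    }

# Hypothetical training set of 100 patient records, labeled by demographic group.
training_groups = ["A"] * 80 + ["B"] * 15 + ["C"] * 5

# Hypothetical shares of each group in the population the tool will serve.
population_shares = {"A": 0.60, "B": 0.25, "C": 0.15}

gaps = representation_gaps(training_groups, population_shares)
underrepresented = sorted(g for g, gap in gaps.items() if gap < 0)
print(underrepresented)
```

In this toy data, groups B and C each fall about ten percentage points short of their share of the population, which is the kind of gap a developer would want to see before training rather than after deployment.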

The conversation around cutting-edge health devices also extends to personal monitoring. Our recent article, “Smart Contact Lenses with AR & Health Diagnostics: Future Vision or Privacy Nightmare?”, touches on similar themes of balancing innovation with ethical considerations, albeit in a different context.

Understanding AI Bias: More Than Just an Algorithm Glitch

When we talk about AI bias, it’s crucial to understand that we’re not just discussing a minor bug or an unfortunate oversight. We’re talking about fundamental issues in how these systems are designed, trained, and deployed. It’s a complex problem with deep roots.

Data Collection and Representation: The Root of the Problem

The primary culprit behind AI bias is almost always the data. Machine learning models, which underpin most AI applications, are only as good as the data they learn from. If the datasets used to train these models are incomplete, imbalanced, or reflect societal biases, the AI will internalize those flaws. For instance, if an AI diagnostic tool for skin conditions is primarily trained on images of lighter skin tones, it may perform poorly or even misdiagnose conditions on darker skin tones.

This isn’t malicious intent; it’s a systemic issue. Historically, clinical trials and medical research have often lacked diversity, leading to a wealth of data that simply doesn’t represent the full spectrum of humanity. And if your AI learns from a biased slice of humanity, it will struggle to serve everyone fairly.
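One common way to surface the kind of gap described in the skin-tone example is to report a model's sensitivity (the share of true cases it catches) separately for each group instead of as one overall number. The sketch below does that in plain Python. The evaluation records are invented for illustration, and `sensitivity_by_group` is a hypothetical helper, not part of any regulatory toolkit.

```python
from collections import defaultdict

def sensitivity_by_group(records):
    """Return {group: true-positive rate} from (group, has_condition, flagged) tuples."""
    true_positives = defaultdict(int)
    positives = defaultdict(int)
    for group, has_condition, flagged in records:
        if has_condition:
            positives[group] += 1
            if flagged:
                true_positives[group] += 1
    return {group: true_positives[group] / positives[group] for group in positives}

# Made-up evaluation results: (skin-tone group, patient has the condition, model flagged it).
records = (
    [("lighter", True, True)] * 9 + [("lighter", True, False)] * 1
    + [("darker", True, True)] * 6 + [("darker", True, False)] * 4
    + [("lighter", False, False)] * 5 + [("darker", False, False)] * 5
)

rates = sensitivity_by_group(records)
# Difference between the best-served and worst-served group.
gap = max(rates.values()) - min(rates.values())
print(rates, round(gap, 2))
```

A single blended accuracy figure would hide the fact that, in this toy data, the model misses four in ten true cases for one group and only one in ten for the other. Which gap counts as acceptable is a policy question the code cannot answer.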

Amplifying Existing Health Disparities

The consequence of this data-driven bias is the amplification of existing health disparities. Populations that have historically faced barriers to healthcare access, or whose health data has been underrepresented, are precisely the ones most at risk from biased AI systems. This could lead to delayed diagnoses, misdiagnoses, or inappropriate treatments for these vulnerable groups, further widening the gap in health outcomes.

It’s a critical ethical challenge, one that demands a proactive and multi-faceted approach. We simply cannot afford to ignore these issues, hoping they will resolve themselves. The health tech community has a responsibility to ensure their innovations uplift all, not just a select few.

Comparison: Traditional Diagnostics vs. AI-Powered Diagnostics

Let’s take a quick look at how traditional diagnostic methods stack up against the promise and peril of AI-powered solutions, especially concerning bias.

| Feature | Traditional Diagnostics (e.g., human interpretation of images) | AI-Powered Diagnostics (e.g., algorithmic image analysis) |
|---|---|---|
| Speed & Volume | Limited by human capacity, slower for large datasets. | Extremely fast, can process vast amounts of data quickly. |
| Consistency | Can vary between practitioners due to human factors (fatigue, experience). | High consistency in applying learned patterns, less subject to human error/fatigue. |
| Bias Potential | Human bias (conscious/unconscious) can influence interpretation. | Algorithmic bias from skewed training data can lead to systemic errors for underrepresented groups. |
| Explainability | Clinicians can articulate their reasoning and thought process. | Often a “black box”; difficult to trace the exact reasoning for a diagnosis. |
| Data Learning | Learns from experience, new research, and professional development. | Learns from massive datasets; requires diverse and clean data to be effective. |

The table clearly illustrates the double-edged sword of AI in medicine. While AI offers unparalleled efficiency and consistency, its inherent biases and lack of explainability pose significant challenges that traditional methods, for all their human limitations, often navigate differently. For those keen to delve deeper into the ethical frameworks guiding AI, a fantastic resource is “The Ethical Algorithm: The Science of Socially Aware Algorithm Design,” which explores how to build AI systems that are fair and transparent from the ground up.

Navigating the Future of Health Tech: A Path Forward

This FDA intervention isn’t the end of the medical AI controversy; it’s a pivotal turning point. It’s a call to action for developers, regulators, and healthcare providers alike to chart a more responsible course for the future of health tech. And let’s be honest, the path forward won’t be easy, but it is absolutely necessary.

The Need for Transparency and Explainable AI (XAI)

One of the most crucial elements moving forward is the demand for greater transparency. We need to shift from ‘black box’ AI to Explainable AI (XAI). XAI systems are designed not just to provide an answer, but also to articulate how they arrived at that answer. This means revealing the factors and data points that most influenced a diagnosis, allowing clinicians to understand, verify, and ultimately trust the AI’s recommendations.

Implementing XAI will require significant research and development. It’s a complex undertaking that often means trading off some degree of predictive power for interpretability. But in healthcare, where human lives are on the line, that trade-off is more than justified. The ability to understand why an AI made a certain decision is paramount for accountability and continuous improvement.
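As a rough illustration of what "revealing the factors that most influenced a diagnosis" can look like, the sketch below uses a deliberately simple, transparent model: a logistic score whose output breaks down into one contribution per input. The feature names, weights, and patient values are all hypothetical. Real diagnostic models are usually far more complex and need dedicated explanation techniques, so treat this as the interpretable end of the trade-off, not as how any particular product works.

```python
import math

def explain_linear_score(weights, bias, features):
    """Return (probability, contributions ranked by absolute size) for a logistic model."""
    # In a linear model each feature's contribution is simply weight * value.
    contributions = {name: weights[name] * value for name, value in features.items()}
    logit = bias + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return probability, ranked

# Hypothetical model parameters and one hypothetical patient record.
weights = {"lesion_diameter_mm": 0.4, "border_irregularity": 1.5, "patient_age_decades": 0.1}
bias = -4.0
patient = {"lesion_diameter_mm": 6.0, "border_irregularity": 0.8, "patient_age_decades": 5.0}

probability, ranked = explain_linear_score(weights, bias, patient)
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
print(f"predicted probability: {probability:.2f}")
```

A clinician reading this output can see which input pushed the score hardest and challenge it if it looks clinically implausible, which is exactly the kind of verification a ‘black box’ rules out.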

Diverse Data Sets and Collaborative Development

Addressing bias fundamentally means tackling the data problem. Developers must move beyond conveniently available, often skewed, datasets and actively seek out and build truly diverse and representative training data. This includes data from varied demographic groups, different geographical regions, and a wide range of clinical presentations.

This isn’t a task for tech companies alone. It requires deep collaboration with healthcare institutions, patient advocacy groups, and governmental bodies to ensure ethical data collection and sharing practices. Projects focusing on federated learning, where AI models learn from decentralized data without direct sharing of sensitive patient information, could also play a significant role here. Organizations like the National Institutes of Health (NIH) are already spearheading initiatives to build more inclusive health datasets.
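For readers curious how federated learning keeps patient records where they are, here is a toy sketch of federated averaging, one widely used approach. Each "hospital" trains on its own data and shares only a model parameter, which a coordinator then averages, weighted by how many records each site holds. The one-parameter model, the two sites, and their data are invented for illustration. Production systems add safeguards (secure aggregation, for example) that this sketch leaves out.

```python
def local_update(w, data, lr=0.01, steps=20):
    """Run a few gradient-descent steps on one site's private (x, y) pairs for y ≈ w * x."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

def federated_average(site_datasets, rounds=10, w=0.0):
    """Coordinate training: sites send back parameters, never their raw records."""
    for _ in range(rounds):
        # Each site starts from the current shared parameter and trains locally.
        local = [(local_update(w, data), len(data)) for data in site_datasets]
        total = sum(n for _, n in local)
        # The new shared parameter is the average, weighted by each site's record count.
        w = sum(w_i * n for w_i, n in local) / total
    return w

# Hypothetical private datasets at two sites; both happen to follow y = 2 * x.
sites = [
    [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)],
    [(1.0, 2.0), (4.0, 8.0)],
]

w = federated_average(sites)
print(round(w, 4))
```

The coordinator in `federated_average` only ever handles the parameter `w` and the record counts; the `(x, y)` pairs stay inside `local_update`, standing in for data that never leaves the hospital.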

What This Means for Patients and Providers

This FDA ruling isn’t just about algorithms and regulations; it has profound implications for the people at the heart of healthcare: patients and providers. It challenges us to reconsider how we integrate advanced diagnostic technology into clinical practice responsibly.

Rebuilding Trust in Diagnostic Technology

For patients, the news of AI bias can be unsettling. It raises legitimate questions about whether these sophisticated tools are truly working for everyone. The FDA’s firm stance, however, can paradoxically help rebuild trust. By showing that regulators are vigilant and willing to intervene when bias is detected, it assures the public that patient safety remains paramount. Transparency and clear communication about AI’s limitations and strengths will be crucial in fostering this trust.

Patients need to know that the tools used in their care are fair and accurate, regardless of their background. This requires not just technical solutions, but also educational initiatives to help the public understand how AI works and what protections are in place. If you’re looking for robust solutions for securing patient health data in AI systems, the “MedSecure AI Data Protection Suite” offers advanced encryption and compliance features that many hospitals are now adopting.

Empowering Healthcare Professionals with Better Tools

For healthcare providers, this moment offers an opportunity to shape the future of their tools. Clinicians are on the front lines; their feedback on AI performance in diverse patient populations is invaluable. They need AI systems that are not only accurate but also interpretable, allowing them to exercise their professional judgment and intervene when necessary. This isn’t about AI replacing doctors, but about AI augmenting their capabilities in a safe and ethical manner.

Furthermore, this incident underscores the importance of continuous education for healthcare professionals on AI in medicine, including understanding its limitations and potential pitfalls. Knowing how to critically evaluate AI-generated insights is becoming an essential skill in modern practice. As part of a broader wellness approach, consider reviewing foundational health practices. Our article, “10 Essential Preventive Tests and Checkups After 50, 2026 Guide,” provides excellent insights into proactive health management, which AI diagnostics will eventually integrate with seamlessly.

The Broader Implications for Medical AI Controversy

This specific FDA action sends ripples far beyond a single diagnostic tool. It’s a bellwether for the entire future of health tech and a significant marker in the ongoing medical AI controversy. The regulatory landscape is shifting, and companies that fail to prioritize ethical AI development will find themselves increasingly out of step.

Regulatory Scrutiny: A Global Trend?

What we’re witnessing is not unique to the United States. Regulatory bodies worldwide are grappling with how to effectively govern AI, especially in high-stakes sectors like healthcare. The European Union, for example, is already moving forward with its comprehensive AI Act, which classifies AI systems by risk level and imposes stringent requirements for high-risk applications, including those in medicine. This FDA move suggests a growing global consensus: AI governance is no longer optional; it’s essential.

Companies developing AI diagnostic tools should anticipate increased scrutiny, not just on efficacy but also on fairness, transparency, and data provenance. Proactive engagement with regulatory guidelines and independent audits for bias will become standard practice, not an afterthought. The bottom line is that self-regulation won’t cut it anymore.

Innovation vs. Safety: Finding the Balance

Some might argue that strict regulation stifles innovation. But here’s the counterpoint: irresponsible innovation can lead to catastrophic failures, eroding public trust and ultimately hindering adoption. The challenge for regulators and developers alike is to find that delicate balance between fostering rapid advancement and ensuring robust safety and ethical standards. This isn’t about choosing one over the other; it’s about making them interdependent.

Successful innovation in AI in medicine will be defined not just by technological prowess, but by its ethical foundation and its proven ability to serve all patients equitably. This incident serves as a powerful reminder that while speed is tempting, thoroughness and ethical rigor are non-negotiable in healthcare. For anyone interested in understanding the regulatory complexities and best practices for developing ethical AI, the book “Governing Medical AI: Ethical, Legal, and Regulatory Challenges” provides a detailed exploration of the subject.

Frequently Asked Questions About FDA AI and Bias

What does the FDA halting an AI diagnostic mean?

It means the FDA has found significant concerns, primarily related to ‘black box’ issues and evidence of bias, with a specific AI-powered diagnostic tool, deeming it not ready for clinical rollout. This mandates further development and transparency from the manufacturer.

Why is ‘black box’ AI a problem in medicine?

‘Black box’ AI refers to systems whose decision-making process is opaque. In medicine, this is problematic because clinicians need to understand why a diagnosis or recommendation was made to properly verify it, explain it to patients, and maintain accountability. Without this transparency, trust and safety are compromised.

How does AI bias occur in diagnostic technology?

AI bias typically arises from the training data. If the datasets used to teach the AI are not diverse or representative of the general population (e.g., lacking data from certain ethnic groups, genders, or socioeconomic backgrounds), the AI will learn these biases and perform less accurately for underrepresented groups.

What are the implications for the future of health tech?

The FDA’s action signals a heightened focus on ethical AI development, transparency (Explainable AI – XAI), and diverse data sets. It implies that future health tech innovations will face stricter regulatory scrutiny regarding fairness and accountability, pushing developers towards more responsible practices.

Can AI in medicine ever be truly unbiased?

Eliminating bias entirely is challenging, as human biases can be inadvertently coded into systems or data. However, continuous efforts in diverse data collection, rigorous testing for bias, and the development of XAI can significantly reduce bias, moving toward equitable performance across all patient groups.

What role do patients play in addressing AI bias?

Patients play a crucial role by demanding transparency, understanding their rights regarding data privacy, and advocating for equitable healthcare technologies. Their experiences and diverse data are essential for training better, more inclusive AI systems.

How will this affect investment in medical AI?

While some short-term caution might occur, this FDA action is likely to steer investment towards companies prioritizing ethical AI, XAI, and robust bias mitigation strategies. It will likely increase the demand for regulatory compliance expertise within health tech startups, ultimately leading to more trustworthy and sustainable innovation.

The recent pause by the FDA on a new AI diagnostic rollout is not a step backward for AI in medicine; it’s a necessary recalibration. It forces the industry to confront its responsibilities head-on, demanding that the incredible power of artificial intelligence be wielded with the utmost care and ethical consideration. The journey toward a truly intelligent and equitable health tech future will undoubtedly have its challenges. But by prioritizing transparency, diversity, and patient safety, we can ensure that these transformative technologies ultimately benefit every single person, creating a healthier world for us all.